From Primitives to Platforms: Navigating the AWS AI/ML Stack as an Architect

A practical decision framework to choose between Bedrock, SageMaker, and pre-trained AI services

Hey there! I’m a seasoned Solution Architect with a strong track record of designing and implementing enterprise-grade solutions. I’m passionate about leveraging technology to solve complex business challenges, guiding organizations through digital transformations, and optimizing cloud and enterprise architectures. My journey has been driven by a deep curiosity for emerging technologies and a commitment to continuous learning.

In this space, I share insights on cloud computing, enterprise technologies, and modern software architecture. Whether it's deep dives into cloud-native solutions, best practices for scalable systems, or lessons from real-world implementations, my goal is to make complex topics approachable and actionable. I believe in fostering a culture of knowledge-sharing and collaboration to help professionals navigate the evolving tech landscape.

Beyond work, I love exploring new frameworks, experimenting with side projects, and engaging with the tech community. Writing is my way of giving back—breaking down intricate concepts, sharing practical solutions, and sparking meaningful discussions. Let’s connect, exchange ideas, and keep pushing the boundaries of innovation together!

We’ve all been there: A high-stakes system design kick-off where the requirement is simply, "We need to integrate AI to solve our data silo problem." Within minutes, the whiteboard is a mess of service icons. One engineer wants to call a serverless API; another wants a custom-trained model for precision; a third is asking if we can just turn on Amazon Q.

As an architect and platform engineer, your value in that meeting isn’t just knowing these services exist—it’s knowing the Stack Depth. Are we building the engine (Foundation), leveraging a specialist (Pre-trained), or deploying a finished interface (Application Layer)?

To cut through the noise, here is the mental model I use to categorize the AWS AI/ML landscape and the architectural notes that drive my final selection.

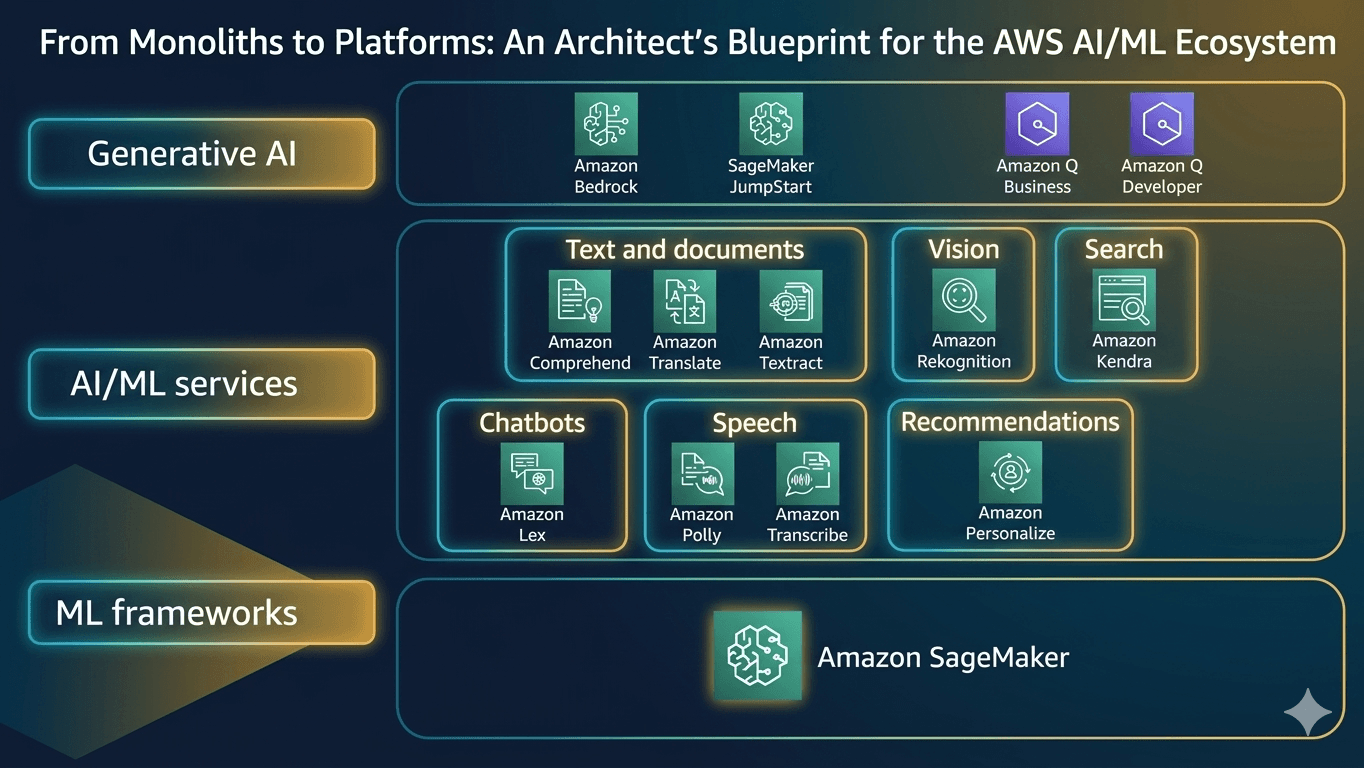

1. Categorizing by Intent: The Architectural Landscape

Instead of a flat list, I group the ecosystem by how we interact with the "intelligence" of the system:

- Foundation Model Platforms: The "engine room" where you decide whether to consume an LLM via API or host your own.

- Pre-trained AI Services: Purpose-built, "narrow" AI tools for specific tasks like OCR, translation, or vision.

- Generative AI Assistants: Higher-level interfaces designed for human interaction and enterprise knowledge.

- Insight & Search Engines: The retrieval layer that connects your proprietary data to your AI logic.

2. Core Service Deep-Dive: A Decision-Maker's List

Level 1: The Foundation ML & AI Platforms

This is the "Engine Room." These services provide the raw intelligence and the infrastructure required to host it.

Amazon SageMaker AI (the core ML platform) – A full-lifecycle workbench to build, train, and deploy custom models.

- Architect Note: Use this for deep engineering: when you have proprietary data that requires a model or architecture Bedrock can't provide. Of the three foundation options, it is the one to pick when you need custom training loops or multi-model endpoints.

Amazon Bedrock – Serverless, API-based access to Foundation Models (FMs).

- Architect Note: The "SaaS" path to GenAI. This is your go-to for time-to-market and minimal operational overhead: with on-demand pricing, you pay per token processed, not for idle instances.
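To show how thin this "consumption layer" is, here is a minimal sketch of a single-turn call through the Bedrock Runtime Converse API. It assumes a client object with a `converse` method, such as `boto3.client("bedrock-runtime")`; the client is passed in so the function can be exercised without AWS credentials. The model ID is a placeholder: use one that is enabled in your account and Region.

```python
from typing import Any, Protocol


class ConverseClient(Protocol):
    """Anything exposing converse(**kwargs), e.g. boto3.client("bedrock-runtime")."""

    def converse(self, **kwargs: Any) -> dict: ...


def ask_bedrock(client: ConverseClient, model_id: str, prompt: str,
                max_tokens: int = 512, temperature: float = 0.2) -> str:
    """Send one user message via the Converse API and return the assistant's text."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens, "temperature": temperature},
    )
    # The reply is a list of content blocks; keep only the text blocks.
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


# Usage against AWS (requires boto3 and credentials):
# import boto3
# client = boto3.client("bedrock-runtime", region_name="us-east-1")
# print(ask_bedrock(client, "<model-id-enabled-in-your-account>", "Summarize our data-silo problem."))
```

There is no endpoint, instance type, or scaling policy anywhere in this code, which is exactly the operational trade-off described above.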

SageMaker JumpStart – The "Acceleration Bridge." A managed hub for deploying open-source models (Llama, Mistral) on dedicated hardware.

- Architect Note: Use this when you need an open-source model (or a specific version of one) that isn't available on Bedrock, or when compliance requires you to host the model on dedicated instances connected to your own private VPC.

Level 2: Pre-trained "Plug-and-Play" AI Services

These are specialized, single-purpose tools. They are "narrow" AI—highly efficient at one task and usually cheaper than calling a general-purpose LLM.

Language & Text

Amazon Comprehend – NLP for sentiment, entity extraction, and PII redaction.

- Architect Note: Often cheaper and faster for simple text analysis than calling a full LLM on Bedrock.
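As a sketch of this "cheaper than an LLM" path, the function below masks PII using the response shape returned by Comprehend's `DetectPiiEntities` operation (an `Entities` list whose items carry `Type`, `Score`, `BeginOffset`, and `EndOffset`). It assumes the offsets index characters of the same string you sent and that entity spans do not overlap; the 0.8 score threshold is an arbitrary starting point.

```python
def redact_pii(text: str, response: dict, min_score: float = 0.8) -> str:
    """Replace each PII span reported by Comprehend with its entity type, e.g. "[NAME]"."""
    entities = [e for e in response.get("Entities", []) if e["Score"] >= min_score]
    # Replace from the end of the string backwards so earlier offsets remain valid.
    for entity in sorted(entities, key=lambda e: e["BeginOffset"], reverse=True):
        begin, end = entity["BeginOffset"], entity["EndOffset"]
        text = f"{text[:begin]}[{entity['Type']}]{text[end:]}"
    return text
```

In practice the response would come from `boto3.client("comprehend").detect_pii_entities(Text=text, LanguageCode="en")`; no prompt engineering or token budget is involved.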

Amazon Translate – Neural Machine Translation (NMT).

- Architect Note: Strictly text-to-text: it does not accept audio. Use it for real-time localization where low latency and translation quality are the primary constraints.

Amazon Textract – Intelligent OCR that understands forms and tables.

- Architect Note: Moving beyond basic OCR. Use this when you need to preserve the relational structure of data (e.g., reading a table in a PDF directly into a database).
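To make "preserving relational structure" concrete, here is a minimal sketch that rebuilds tables from the `Blocks` list returned by Textract's `AnalyzeDocument` when it is called with `FeatureTypes=["TABLES"]`. It relies on `TABLE` blocks pointing at `CELL` blocks (which carry 1-based `RowIndex` and `ColumnIndex`) and on cells pointing at `WORD` blocks through `CHILD` relationships; merged cells, checkboxes, and multi-page tables are ignored for brevity.

```python
from collections import defaultdict


def _child_ids(block: dict):
    """Yield the IDs a block points to through CHILD relationships."""
    for rel in block.get("Relationships", []):
        if rel["Type"] == "CHILD":
            yield from rel["Ids"]


def tables_from_blocks(blocks: list) -> list:
    """Rebuild each TABLE in an AnalyzeDocument response as a list of rows of cell text."""
    by_id = {block["Id"]: block for block in blocks}
    tables = []
    for table in (b for b in blocks if b["BlockType"] == "TABLE"):
        grid = defaultdict(dict)  # RowIndex -> {ColumnIndex: cell text}
        for cell_id in _child_ids(table):
            cell = by_id[cell_id]
            if cell["BlockType"] != "CELL":
                continue  # only CELL blocks carry a grid position
            words = [by_id[i]["Text"] for i in _child_ids(cell) if by_id[i]["BlockType"] == "WORD"]
            grid[cell["RowIndex"]][cell["ColumnIndex"]] = " ".join(words)
        n_cols = max((col for row in grid.values() for col in row), default=0)
        # Indices are 1-based; cells with no words become empty strings.
        tables.append([[grid[r].get(c, "") for c in range(1, n_cols + 1)] for r in sorted(grid)])
    return tables
```

Each returned table is a list of rows, ready to be mapped onto database columns instead of being re-parsed from flat OCR text.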

Audio & Conversational

Amazon Lex – A service for building conversational interfaces (chatbots) using voice and text.

- Architect Note: The logic layer for chatbots. It handles the "Intent" and "Slot" fulfillment. Think of it as the brains behind the conversational flow, often backed by Lambda for fulfillment.
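The "Lambda for fulfillment" pattern looks roughly like this for a Lex V2 bot. The event and response shapes follow the Lex V2 Lambda format (`sessionState.intent.slots`, a `Close` dialog action, and a `messages` list); the `OrderCoffee` intent and its `Drink` and `Size` slots are hypothetical, and the handler assumes Lex has already elicited both slots before invoking fulfillment.

```python
from typing import Optional


def slot_value(intent: dict, slot_name: str) -> Optional[str]:
    """Return a slot's interpreted value, or None if Lex has not filled it."""
    slot = (intent.get("slots") or {}).get(slot_name)
    if not slot or "value" not in slot:
        return None
    return slot["value"].get("interpretedValue")


def lambda_handler(event: dict, context: object) -> dict:
    """Fulfillment hook for a hypothetical OrderCoffee intent with Drink and Size slots."""
    intent = event["sessionState"]["intent"]
    drink = slot_value(intent, "Drink")
    size = slot_value(intent, "Size")
    # Real business logic (e.g. writing the order to a database) would run here.
    message = f"Your {size} {drink} is on its way."
    intent["state"] = "Fulfilled"
    return {
        # Close ends the conversation turn; Lex speaks or displays the messages.
        "sessionState": {"dialogAction": {"type": "Close"}, "intent": intent},
        "messages": [{"contentType": "PlainText", "content": message}],
    }
```

Lex owns the dialog (which slot to ask for next); the Lambda only executes the business action once the intent is ready.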

Amazon Polly – Text-to-Speech (TTS). Turns text into lifelike human speech.

- Architect Note: Beyond choosing a voice, use SSML tags for granular control over pronunciation, pauses, and prosody (such as speaking rate and volume).
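A small helper can generate that SSML. This sketch escapes the input text so characters like `&` don't break the XML, slows the speaking rate with `<prosody>`, and inserts a `<break>` between sentences; the default rate and pause values are arbitrary choices, not recommendations.

```python
from xml.sax.saxutils import escape, quoteattr


def build_ssml(sentences: list, rate: str = "95%", pause_ms: int = 400) -> str:
    """Wrap plain-text sentences in SSML with a speaking rate and a pause between sentences."""
    pause = f'<break time="{pause_ms}ms"/>'
    # escape() turns &, <, > into entities so user text cannot break the XML document.
    body = pause.join(escape(sentence) for sentence in sentences)
    return f"<speak><prosody rate={quoteattr(rate)}>{body}</prosody></speak>"
```

Pass the result to Polly with `TextType="ssml"`, for example `boto3.client("polly").synthesize_speech(Text=ssml, TextType="ssml", OutputFormat="mp3", VoiceId="Joanna")`.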

Amazon Transcribe – An automatic speech recognition (ASR) service that converts spoken audio into text.

- Architect Note: Essential for building searchable archives of call recordings or generating real-time closed captions.

Video, Search & Personalization

Amazon Rekognition – Computer vision. Highly scalable for image and video analysis.

- Architect Note: Key for safety compliance (PPE detection) or content moderation without building custom vision models.
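For PPE checks, Rekognition's `DetectProtectiveEquipment` operation can summarize compliance for you when you pass `SummarizationAttributes`. The sketch below builds that request for an image in S3 and turns the returned `Summary` into a simple verdict; the bucket, key, required equipment types, and 80% confidence floor are illustrative assumptions.

```python
def ppe_request(bucket: str, key: str,
                required: tuple = ("HEAD_COVER", "FACE_COVER"),
                min_confidence: float = 80.0) -> dict:
    """Build DetectProtectiveEquipment arguments that ask Rekognition for a compliance summary."""
    return {
        "Image": {"S3Object": {"Bucket": bucket, "Name": key}},
        "SummarizationAttributes": {
            "MinConfidence": min_confidence,
            "RequiredEquipmentTypes": list(required),
        },
    }


def ppe_verdict(response: dict) -> str:
    """Map the response Summary to COMPLIANT, VIOLATION, or REVIEW."""
    summary = response.get("Summary", {})
    if summary.get("PersonsWithoutRequiredEquipment"):
        return "VIOLATION"  # someone is confidently missing required equipment
    if summary.get("PersonsIndeterminate"):
        return "REVIEW"     # e.g. a head or face was not visible enough to decide
    return "COMPLIANT"      # note: an image with no detected people also lands here
```

Calling `boto3.client("rekognition").detect_protective_equipment(**ppe_request("site-cameras", "gate-3.jpg"))` (hypothetical bucket and key) would produce the response to pass to `ppe_verdict`; no model training is involved.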

Amazon Kendra – An intelligent, semantic search engine.

- Architect Note: The "Librarian." It focuses on finding the exact source document across siloed data.
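The "Librarian" behavior shows up directly in the shape of a Kendra `Query` response: a ranked `ResultItems` list in which each item carries a `DocumentURI`, a `DocumentTitle`, and a coarse `ScoreAttributes.ScoreConfidence` bucket. This sketch turns that into a deduplicated list of source links; the confidence floor is an assumption you would tune.

```python
CONFIDENCE_RANK = {"VERY_HIGH": 4, "HIGH": 3, "MEDIUM": 2, "LOW": 1, "NOT_AVAILABLE": 0}


def ranked_sources(response: dict, min_confidence: str = "MEDIUM") -> list:
    """Return (title, uri, confidence) tuples for Kendra results at or above min_confidence."""
    floor = CONFIDENCE_RANK[min_confidence]
    sources, seen = [], set()
    for item in response.get("ResultItems", []):  # Kendra returns these in rank order
        confidence = item.get("ScoreAttributes", {}).get("ScoreConfidence", "NOT_AVAILABLE")
        uri = item.get("DocumentURI")
        if uri is None or uri in seen or CONFIDENCE_RANK.get(confidence, 0) < floor:
            continue
        seen.add(uri)  # keep only the best-ranked hit per document
        title = item.get("DocumentTitle", {}).get("Text", uri)
        sources.append((title, uri, confidence))
    return sources
```

The input would come from `boto3.client("kendra").query(IndexId="<your-index-id>", QueryText="What is our travel policy?")`. Note that the output is a list of documents to read, not a synthesized answer.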

Amazon Personalize – Real-time recommendation engine based on user behavior.

- Architect Note: Highly specialized. Don't try to build this with a general LLM; use this for retail/media engagement.

Level 3: Generative AI Assistants (The Application Layer)

Amazon Q Business – A fully managed, Generative AI–powered assistant for your enterprise data.

- Architect Note: This is "RAG-in-a-box." It connects to 40+ enterprise data sources (S3, Salesforce, Microsoft 365) through built-in connectors and respects the access permissions defined in those source systems.

Amazon Q Developer – An AI assistant designed specifically for the Software Development Lifecycle (SDLC).

- Architect Note: It lives in your IDE and the AWS Console to help with code generation, testing, and even upgrading legacy Java versions.

3. The Architect’s Decision Matrix: Bedrock vs. JumpStart vs. SageMaker AI

In design reviews, this is the most common fork in the road. I break it down by Infrastructure Responsibility:

| Feature | Amazon Bedrock | SageMaker JumpStart | SageMaker AI |
|---|---|---|---|
| Operational Effort | Minimal. Serverless. | Low. Managed instances. | High. You own training, containers, and endpoints. |
| Scaling | Token-based, managed by AWS (quota-bound) | Instance-based (auto scaling adds instances) | Custom (Full Control) |
| Environment | AWS-managed API (private access via PrivateLink) | Private VPC | Custom VPC/Container |
| Best For... | Rapid GenAI Prototyping | Private Open-Source Models | Ground-up ML Development |

The Architect’s Framework:

- Bedrock First: If a model on Bedrock meets 80% of your needs, use it. The overhead of hosting your own is rarely worth the 20% gain.

- JumpStart Second: Use it if you need an open-source model on "private" compute, or a fine-tuning option that isn't available through Bedrock's API.

- SageMaker AI Last: Only when you are building something truly custom or doing traditional ML that doesn't fit the "Foundation Model" mold.
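The framework above is simple enough to write down as code, which can serve as a shared checklist in design reviews. This is a hypothetical encoding of the heuristic, not an AWS tool: the `Requirements` fields are illustrative, and the 0.8 threshold mirrors the "80% of your needs" rule of thumb. The custom-ML check runs first only because it is a hard constraint; in order of preference, SageMaker AI is still the last resort.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    bedrock_fit: float           # rough share of requirements a Bedrock model meets (0.0 to 1.0)
    needs_private_compute: bool  # compliance demands dedicated instances you control
    needs_custom_ml: bool        # custom training loops or traditional (non-FM) ML


def choose_platform(req: Requirements, fit_threshold: float = 0.8) -> str:
    """Apply the 'Bedrock first, JumpStart second, SageMaker AI last' heuristic."""
    if req.needs_custom_ml:
        # Hard constraint: neither Bedrock nor a stock JumpStart model covers it.
        return "SageMaker AI"
    if req.bedrock_fit >= fit_threshold and not req.needs_private_compute:
        return "Amazon Bedrock"
    # Bedrock falls short, or compliance demands private compute: host an open model.
    return "SageMaker JumpStart"
```

Writing the rule down this way makes the trade-off explicit: moving off Bedrock should require a named constraint, not a vague preference.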

4. Orchestration: Avoiding the "Translation Trap"

A common design flaw is assuming a service does more than its narrow purpose. For example, developers often assume Amazon Polly (Text-to-Speech) will translate English to Spanish. It won't.

The Pipeline Mindset:

As a Platform Engineer, you must orchestrate the data flow. Here is a typical architecture for a multilingual voice processor:

- Ingest: S3 Event Trigger → AWS Lambda.

- Transcription: Transcribe (Speech → Source Text).

- Translation: Translate (Source Text → Target Text).

- Synthesis: Polly (Target Text → Target Audio).

- Output: Store in S3 and notify via SNS/SQS.

The Warning: If you skip the translation step and send English text straight to a Spanish Polly voice, Polly won't translate it; it will read the English words with Spanish pronunciation rules, which sounds like gibberish to a Spanish-speaking listener. Context matters.
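The pipeline can be sketched as a Lambda-style handler with the AWS calls injected as plain functions, which keeps the stage order explicit and testable without an AWS account. In a real deployment the stages would wrap `start_transcription_job` (Transcribe), `translate_text` (Translate), `synthesize_speech` (Polly), S3 `put_object`, and SNS `publish`. Because batch Transcribe jobs are asynchronous, you would typically split the handler at that point and resume on job completion, for example with Step Functions or an EventBridge rule. The `Stages` container, the output-key naming, and the default target language are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable
from urllib.parse import unquote_plus


@dataclass
class Stages:
    """Injected stage functions; in AWS they would wrap Transcribe, Translate, Polly, S3, SNS."""
    transcribe: Callable[[str, str], str]    # (bucket, key) -> source-language text
    translate: Callable[[str, str], str]     # (text, target_lang) -> translated text
    synthesize: Callable[[str, str], bytes]  # (text, target_lang) -> audio bytes
    store: Callable[[str, bytes], str]       # (output_key, audio) -> stored location
    notify: Callable[[str], None]            # (location) -> None


def s3_objects(event: dict):
    """Yield (bucket, key) pairs from an S3 event notification; keys arrive URL-encoded."""
    for record in event.get("Records", []):
        s3 = record["s3"]
        yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


def process_event(event: dict, stages: Stages, target_lang: str = "es") -> list:
    """Run Transcribe -> Translate -> Polly for each uploaded audio file, in that order."""
    outputs = []
    for bucket, key in s3_objects(event):
        source_text = stages.transcribe(bucket, key)
        target_text = stages.translate(source_text, target_lang)  # the step Polly cannot do
        audio = stages.synthesize(target_text, target_lang)
        location = stages.store(f"{key.rsplit('.', 1)[0]}.{target_lang}.mp3", audio)
        stages.notify(location)
        outputs.append(location)
    return outputs
```

Injecting the stages also makes the "Translation Trap" testable: a unit test can assert that the text reaching `synthesize` is the translated text, not the transcript.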

5. The "Corporate Data" Confusion: Kendra vs. Amazon Q

I often see teams struggle to differentiate these because both target internal data. However, the architectural intent is fundamentally different:

Amazon Kendra (The Specialist): It’s a Search Engine. It helps users find documents. Use it when the requirement is a ranked list of accurate source links.

Amazon Q Business (The Analyst): It’s a Conversational Assistant. It synthesizes the answer. Use it when the user wants a summarized answer instead of a list of files to read.

Architectural Insight: These are not competitors; they are partners. You can actually use your existing Kendra Index as the data source for Amazon Q Business.

Final Thoughts: Think in Systems, Not Services

AWS AI services are powerful, but they are just primitives. As architects, we shouldn't fall in love with the service name; we should fall in love with the data flow.

The real engineering happens in the "arrows" between the boxes. Whether you are using EventBridge to trigger an image analysis or Step Functions to orchestrate a complex LLM workflow, your goal is to build a system that is resilient, observable, and cost-optimized.

The big question for your next design review: Are you building a custom "creation platform" with SageMaker, or a "consumption layer" with Bedrock? The answer will define your team's velocity for the next year.

What’s your "Aha!" moment with the AWS AI stack? I'm curious to hear how you're handling service overlaps in your production environments. Let's discuss in the comments!